A number of tech giants – including the team behind Stephen Hawking's assistive technologies – are working to develop new motor neurone disease treatments

Intel – and the team behind late physicist Stephen Hawking's assistive technologies – has joined the Next Gen Voice project (Credit: Doug Wheller/Flickr)

There is currently no cure for motor neurone disease, a debilitating neurological condition that affects roughly 330,000 people worldwide – but AI-powered treatments have the potential to improve their quality of life significantly. Jamie Bell speaks to Rolls-Royce’s IT innovation strategist Stuart Moss about his work with the Motor Neurone Disease Association over the past 12 months.

Imagine you are doing something as simple as washing up at home, and you drop a plate – you think nothing of it, as most people would, and get on with your life.

The next day, a glass slips from your hand. You start to notice you are losing your grip and dropping things more frequently. Although you find this strange, you aren’t overly worried.

The following week, you collapse in your garden at home and decide it’s time to go to hospital.

After a week of extensive tests that rule out every other possibility, a doctor delivers some devastating news: you have motor neurone disease (MND).

People diagnosed with MND are usually given between 18 months and five years to live – however, the process by which you lose your motor skills and become physically paralysed happens extremely quickly.

One of the first things affected by the disease is speech, which becomes slurred early on, and deteriorates until the patient can’t even form sounds, let alone take part in everyday conversations.

But working closely with the Motor Neurone Disease Association (MNDA) – a UK-based charity – Stuart Moss has set about giving people with MND their voices back using artificial intelligence.

Over the past 12 months, Moss has assembled a technology dream team featuring Dell, Microsoft, Google, Intel, Ascensia and Computacenter.

Intel’s team includes the people behind the assistive technologies used by late physicist Stephen Hawking, who lived with an early-onset, slow-progressing form of MND known as amyotrophic lateral sclerosis (ALS).

The companies have combined their resources to create Next Gen Voice, a group of three AI-based solutions to help people living with MND – from diagnosis through to losing the power of speech.

Moss says: “We want to make motor neurone disease something you live with rather than die from.

“When you have MND, eventually the only things that work are your eyes and your ears, so you are observing the world but not taking part in it, and I thought: ‘Not on my watch’.

“We’re going to fix that – your words, in the way you would say them.”

How did a Rolls-Royce IT expert end up innovating treatments for motor neurone disease?

On Christmas Day in 2014, Moss’ father passed away having lived with MND since the summer of the previous year.

Moss experienced first-hand the lack of real support for people with the disease as their ability to speak deteriorates.

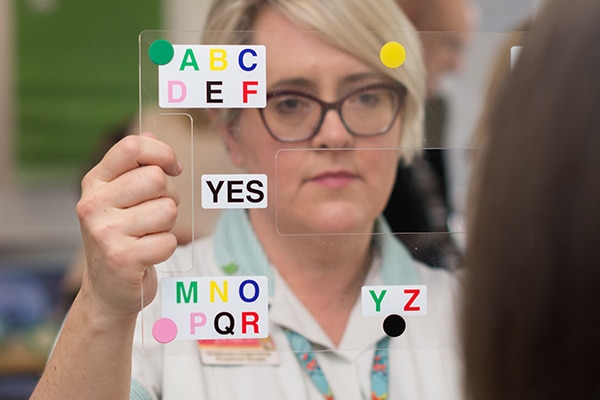

In particular, he recalls the fact that when his father was diagnosed, he was simply handed a card featuring each letter of the alphabet, and the words ‘yes’ and ‘no’ at the bottom – the main tool people with MND are given to help them communicate.

So in January 2019, when the opportunity arose to take part in a corporate social responsibility (CSR) project via his position at Rolls-Royce, he decided to do something about this.

As Moss remarks, he could have been out painting a school in Derby, where he is based, or planting trees in an effort to save the planet.

Instead, he may just have led a team to develop technologies capable of improving the quality of life for people living with MND.

Moss, who presented the project at the 2019 Dell Tech Forum in London last month, says: “I spoke to the Motor Neurone Disease Association about getting together and seeing if we could achieve something different to what already exists.

“When people ask me ‘how did you pull this off?’, the answer is: I just asked, and no one had ever asked before. That’s how it started.”

Voice banking and Google Assistant tech helping people in the earlier stages of MND

As part of the Next Gen Voice project, the MNDA has paid for Belgian software company Acapela’s text-to-speech tech, which allows users to record their voice before they begin to lose the power of speech.

The charity has made this tech available to its members for free from the moment they are diagnosed.

Dell and Computacenter have since combined to make voice banking even easier for MNDA members to access – by sending a laptop with the software already downloaded straight to their home.

Following diagnosis, the early stages of MND can be very difficult for the patient and those around them when it comes to having a conversation, according to Moss.

As their speech becomes more slurred, it is increasingly difficult for people to understand what they are saying – meaning even a brief chat can take a long time and is often uncomfortable for both parties.

After joining the project, Google re-programmed its AI-powered virtual assistant to translate the slurred speech of people in the early stages of MND – allowing carers and loved ones to understand them.

The tech giant found it can be used to decipher what someone with MND is saying, and then translate it to perfectly clear speech using artificial intelligence.

Google has agreed to make this software, which it has dubbed “Euphonia”, freely available as well. The MNDA is currently offering it to members to try out ahead of a wider release.

When combined with Acapela’s banked voice recordings, this new development means the user’s slurred speech can be understood, translated and spoken aloud in their voice via a computer.

Giving a voice back to those unable to speak

The other innovation Moss and his team have been working on helps people whose speech has fully deteriorated – which he describes as a “horrific situation”.

Current eye-tracking methods that involve the user staring at a screen and selecting one letter at a time to spell out what they want to say are time-consuming, and often lead to conversations becoming stilted and frustrating.

Moss discovered that giving an AI system access to someone’s text messages – a data set that is widely available on most people’s mobile phones – enables it to build up an understanding of precisely how they talk, and even their broad views on certain subjects.

Drawing on every one of these text messages, it can instantly construct sentences that replicate how the user speaks, and generate a list of options to respond with during a conversation.

The list includes answers corresponding to a range of moods – from positive, happier responses to more negative and angry options – and each is labelled with an emoticon to make this clearer.

The user also has the option to back out of this selection altogether and enter a response manually using traditional eye-tracking technology, letter by letter, if they feel none of the answers are suitable.
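The reply-selection flow described above can be illustrated with a toy sketch. It is not the team's implementation: it simply retrieves the user's own past messages by word overlap with the incoming question, as a stand-in for whatever AI model the project actually uses. The mood word lists, emoticon labels and every function name here are hypothetical, chosen only for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical mood lexicon and labels, invented for this sketch.
MOOD_WORDS = {
    "positive": {"great", "love", "yes", "happy", "brilliant"},
    "negative": {"no", "not", "hate", "tired", "awful"},
}
EMOTICONS = {"positive": ":)", "neutral": ":|", "negative": ":("}
MANUAL_ENTRY = "[spell out a reply letter by letter]"


@dataclass
class Option:
    emoticon: str
    text: str


def tokens(text):
    """Lower-case a message and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def mood_of(message):
    """Classify a message by counting words from the toy lexicon."""
    words = tokens(message)
    positive = len(words & MOOD_WORDS["positive"])
    negative = len(words & MOOD_WORDS["negative"])
    if positive > negative:
        return "positive"
    if negative > positive:
        return "negative"
    return "neutral"


def suggest_replies(prompt, history):
    """Return one past message per mood, then the manual fallback."""
    prompt_words = tokens(prompt)
    best = {}  # mood -> (overlap score, message)
    for message in history:
        score = len(prompt_words & tokens(message))
        mood = mood_of(message)
        if mood not in best or score > best[mood][0]:
            best[mood] = (score, message)
    options = [
        Option(EMOTICONS[mood], best[mood][1])
        for mood in ("positive", "neutral", "negative")
        if mood in best
    ]
    # The user can always reject every suggestion and type manually.
    options.append(Option("", MANUAL_ENTRY))
    return options


if __name__ == "__main__":
    past_messages = [
        "Yes I would love a cup of tea",
        "No tea for me, I am tired",
        "Tea is in the cupboard",
    ]
    for option in suggest_replies("Would you like some tea?", past_messages):
        print(option.emoticon, option.text)
```

Under these assumptions, the user sees at most one suggestion per mood, each tagged with an emoticon, and the manual option always appears last so that backing out takes a single selection.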

Moss says this new use of AI – referred to by the team as “Quips” – enables an effective two-way conversation.

Quips also has the potential to be used alongside the text-to-speech capabilities of Acapela’s voice banking software, making “your words, in your voice” possible, even in the later stages of MND.

Euphonia and Quips were demoed in October 2019, and Moss hopes that at some point in 2020 both will be available for free to anyone in the UK living with MND – testing of the latter is due to begin soon.

“We believed there should be a way to assist people with motor neurone disease, and make their lives a lot more memorable,” Moss adds – and with these new technologies, he and his team may be on the brink of achieving this.